Introduction

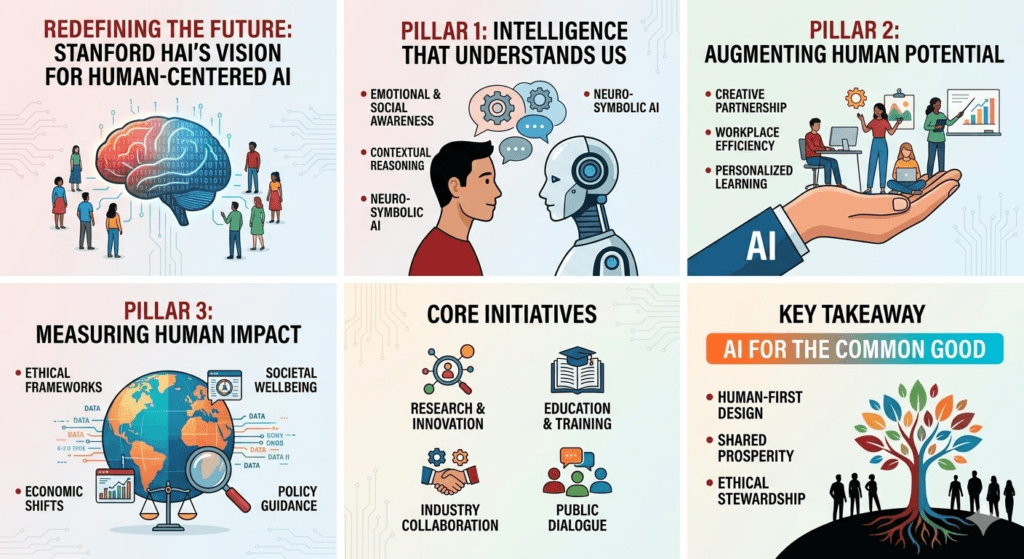

As artificial intelligence shifts from speculative promise to daily utility, the Stanford Institute for Human-Centered AI (HAI) is leading a global “moonshot” to ensure this technology serves as a collaborator, not a replacement.

Guided by co-directors Fei-Fei Li and James Landay, HAI’s 2026 vision moves beyond the hype of chatbots to focus on three core pillars that redefine our relationship with machine intelligence.

Artificial intelligence is transforming how societies function, with effects across healthcare, finance, education, and governance. Yet our understanding of the ethical and societal implications of such powerful technologies often lags behind technical advances. Recognizing this gap, Stanford University established Stanford HAI to help ensure that AI serves the public good.

Rather than treating AI as a purely technical problem, Stanford HAI takes a multidisciplinary approach. By combining research, policy, education, and ethics, it aims to steer AI development toward societal values and human flourishing.

What Is Human-Centered AI?

At its core, human-centered AI is not about replacing people—it’s about enhancing human potential.

According to Stanford HAI, this approach focuses on:

- Augmenting human abilities

- Addressing real societal needs

- Drawing inspiration from human intelligence

Rather than building systems that operate independently of humans, HAI envisions AI as a collaborative partner—supporting decision-making, creativity, and productivity.

The Origin of Stanford HAI

Founded in 2019 at Stanford University, Stanford HAI was created as an interdisciplinary hub bringing together:

- Computer scientists

- Policymakers

- Social scientists

- Humanists

- Industry leaders

Its mission is clear: advance AI research, education, policy, and practice to improve the human condition.

This interdisciplinary model reflects a critical insight:

AI is not just a technical challenge—it’s a societal transformation.

The Core Vision: AI That Serves Humanity

Stanford HAI’s vision is built on three foundational principles:

1. Augmentation Over Automation

Rather than replacing people in their jobs, HAI promotes AI systems that:

- Automate repetitive tasks

- Free humans for higher-level thinking

- Enhance creativity and innovation

This aligns with the broader idea that AI should expand human capability, not diminish it.

2. Ethical, Fair, and Transparent AI

HAI emphasizes that future AI systems must be:

- Ethically aligned with human values

- Fair and unbiased across populations

- Transparent and explainable

This is critical in areas like healthcare, law, and finance, where opaque AI decisions can have serious consequences.

3. Interdisciplinary Collaboration

Unlike traditional AI labs, Stanford HAI integrates expertise from:

- Law and governance

- Medicine and biology

- Economics and public policy

- Philosophy and ethics

This approach is meant to ensure that AI systems are designed with a holistic understanding of their human impact.

Real-World Impact: From Theory to Practice

Stanford HAI is not just a research initiative—it actively shapes the global AI ecosystem.

Policy & Governance

HAI works with governments and policymakers to:

- Develop responsible AI regulations

- Provide data-driven insights (e.g., the annual AI Index report)

- Guide ethical deployment of AI systems

Education & Leadership

The institute trains:

- Future AI leaders

- Policymakers

- Business executives

The goal is to help them understand both the technical and ethical dimensions of AI.

Research & Innovation

From healthcare diagnostics to climate monitoring, HAI explores how AI can:

- Improve lives in underserved communities

- Enable better decision-making

- Address global challenges

The Role of Visionary Leadership

A key figure behind this movement is Fei-Fei Li, a pioneer in modern AI and co-director of Stanford HAI.

Her philosophy emphasizes:

- Human dignity in AI development

- Diversity in AI talent

- Responsible innovation at scale

Her work underscores a central belief:

The future of AI must be guided by human values, not just technical capability.

Why Stanford HAI’s Vision Matters Now

As AI becomes more powerful—with generative models, autonomous systems, and decision-making algorithms—the risks also grow:

- Bias and inequality

- Job displacement fears

- Lack of transparency

- Ethical dilemmas

Stanford HAI’s framework offers a counterbalance:

- AI as a tool for empowerment

- Innovation aligned with societal good

- Technology guided by human-centered design

The Future: Human + AI, Not Human vs AI

Stanford HAI envisions a future where:

- Humans and AI collaborate seamlessly

- AI systems are trustworthy and aligned

- Innovation benefits everyone—not just a few

This is not just a technological shift—it’s a philosophical redefinition of intelligence itself.