In the rush to adopt AI across enterprises and products, one stubborn issue keeps popping up: hallucinations, the confident but incorrect responses generated by AI systems. It’s time to shift how we think about them. They are not just model quirks or training glitches. They are a system design problem.

Artificial Intelligence is evolving rapidly. From chatbots to enterprise copilots, AI is transforming how we work, search, build, and automate.

Yet one major issue continues to dominate conversations:

Hallucinations.

AI systems confidently generating incorrect, fabricated, or misleading information.

But according to a powerful LinkedIn insight shared by Rathnakumar Udayakumar, hallucinations are not merely a model defect — they are fundamentally a system design problem.

And that distinction changes everything.

Why Hallucinations Happen

When AI systems “hallucinate,” the model fills gaps with statistically plausible text because the system architecture forces it to respond even when reliable information isn’t available. A large language model (LLM) doesn’t verify truth before answering; it predicts the most likely continuation of the input sequence. When the input is weak or context is missing, the model defaults to plausible guesses rather than factual accuracy.

This means hallucinations are less about the size of the model and more about how the system is set up to handle uncertainty and context.
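To make the “forced to respond” point concrete, here is a minimal sketch. It assumes a toy setup in which candidate answers already come with probabilities; the function names, the example numbers, and the 0.6 threshold are all hypothetical, and real LLM APIs do not expose answer-level probabilities this directly.

```python
def forced_answer(probs: dict) -> str:
    """Always return the most likely candidate, however weak it is."""
    return max(probs, key=probs.get)


def answer_or_abstain(probs: dict, min_confidence: float = 0.6) -> str:
    """System-level wrapper: abstain when the best candidate is not strong enough."""
    best = forced_answer(probs)
    return best if probs[best] >= min_confidence else "I don't know"


# Hypothetical candidate distributions (illustrative numbers only).
strong = {"Paris": 0.9, "Lyon": 0.1}
weak = {"1947": 0.40, "1948": 0.35, "1952": 0.25}

print(forced_answer(weak))       # picks a candidate even though none stands out
print(answer_or_abstain(weak))   # the wrapper declines instead
print(answer_or_abstain(strong))
```

The model-side behavior is identical in both functions; only the surrounding system decides whether a weak guess is allowed to reach the user.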

From Model Fixes to Architecture Fixes

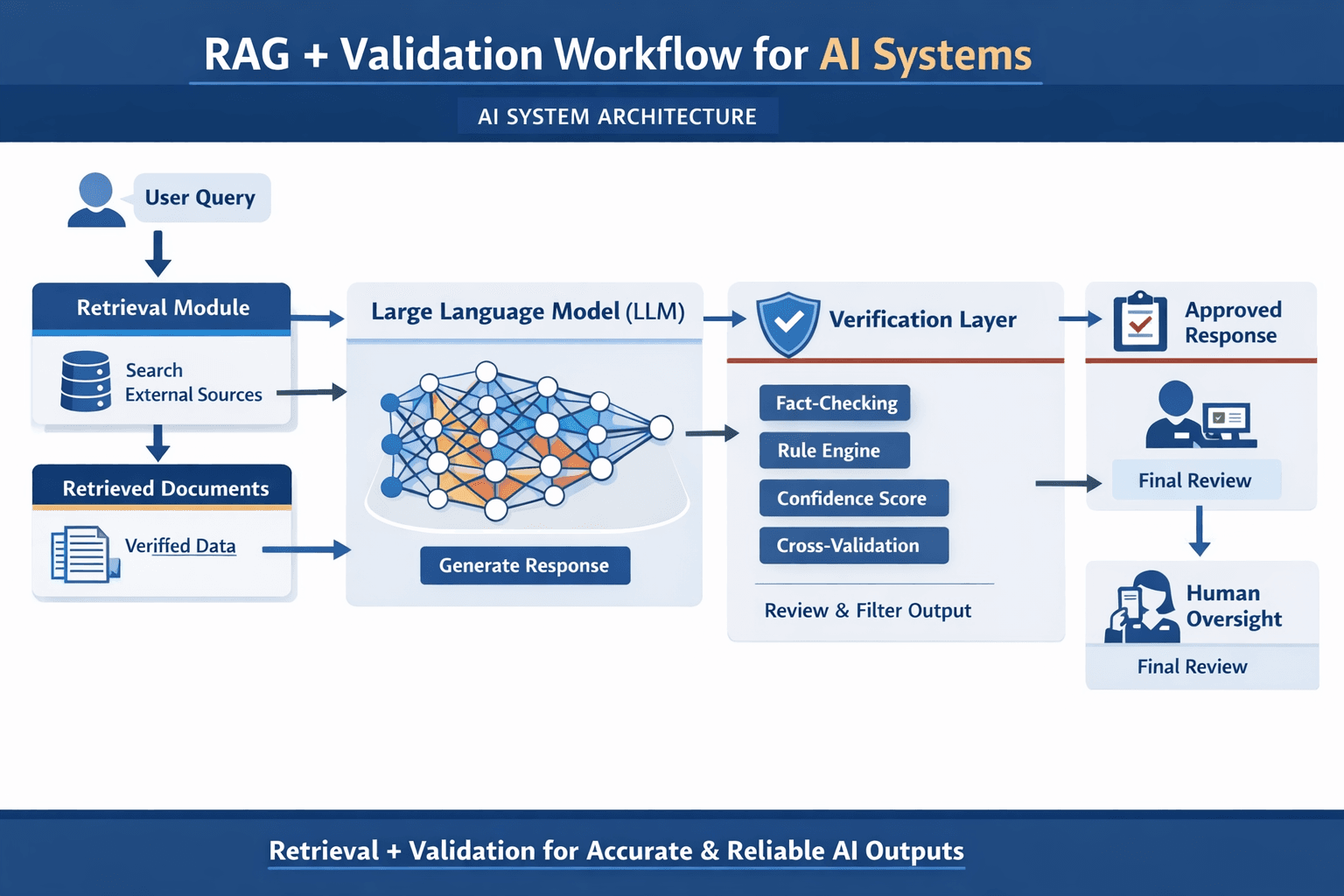

Instead of endlessly tuning parameters or crafting clever prompts, the focus needs to expand to the architecture surrounding the model — the system that controls what goes in and what comes out. Here are six practical approaches to reduce hallucinations in real-world AI applications:

1. Retrieval-Augmented Generation (RAG)

Use RAG pipelines to ground responses in external verified data. Instead of relying solely on an LLM’s internal knowledge, retrieve information from trusted, up-to-date sources and let the model generate answers based on that foundation.

2. Structured Output Formats

Constrain outputs using schemas (e.g., JSON templates, required fields). This makes outputs predictable and easier to validate programmatically, reducing the chances of free-form misinformation.

3. Prompt Clarity

Ambiguous or underspecified prompts make it more likely the system will fill gaps with invented facts. Clear instructions that define roles, expected structure, and edge cases reduce guesswork.

4. Lower Temperature and Sampling Settings

Controlling generation randomness (via settings like temperature or top-p sampling) can improve factual consistency — especially where accuracy outweighs creativity.

5. Verification Layers

Layer extra checks — rule engines, confidence scores, fact validators, or cross-referencing mechanisms — before presenting a response to users. These filters catch unreliable answers early.

6. Human-in-the-Loop Oversight

For high-stakes scenarios, don’t automate blindly. Human reviewers, audit logs, and escalation paths act as safety nets to prevent false, risky information from causing harm.

The Bigger Shift: System Accountability

The biggest takeaway is this: hallucination isn’t solved by bigger or “smarter” models; it’s mitigated by better systems. When your AI stack is architected to control inputs, structure outputs, and validate results, hallucination becomes a manageable engineering challenge rather than an unpredictable flaw.

In complex, regulated, or mission-critical environments such as legal, medical, or enterprise workflows, this architectural vigilance isn’t optional; it’s essential for trust, compliance, and safe adoption. Once systems are designed to work with uncertainty rather than against it, hallucination isn’t just reduced; it becomes traceable and governable.

🔎 What Is an AI Hallucination?

In technical terms, hallucination occurs when a Large Language Model (LLM) generates content that is fluent and plausible-sounding but factually incorrect or unsupported by any source.

Why?

Because LLMs are probabilistic systems. They predict the most likely next token based on patterns — not truth validation.

If the system architecture:

- Lacks verified context

- Forces the model to always produce an answer

- Doesn’t include validation layers

…then hallucination becomes inevitable.

This is not a “bug.” It is a design outcome.

🧠 The Core Insight: Models Don’t Verify Truth — Systems Must

A model alone cannot:

- Check real-time databases

- Validate legal compliance

- Confirm updated statistics

- Understand business-specific constraints

That responsibility belongs to the system around the model.

When organizations treat hallucinations as a tuning issue instead of an architectural flaw, they miss the real solution.

🏗️ 6 System Design Strategies to Reduce AI Hallucinations

Let’s move from theory to execution.

1️⃣ Retrieval-Augmented Generation (RAG)

Instead of relying solely on the model’s internal training data, integrate external verified sources.

How it works:

- Retrieve relevant documents from trusted databases

- Feed that context into the model

- Generate grounded responses

Grounding answers in retrieved text reduces fabricated outputs, provided the retrieved documents are themselves relevant and accurate.
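The three steps above can be sketched in a few lines. This is a toy illustration, not a production pipeline: the `DOCS` corpus, the function names, and the word-overlap scoring are all assumptions made for the example, and a real system would typically use embedding or search-index retrieval and then pass the prompt to a model.

```python
import re
from typing import Optional

# Hypothetical trusted corpus; in practice this would be a vetted document store.
DOCS = {
    "refund-policy": "A refund can be requested within 30 days of purchase.",
    "shipping": "Standard shipping takes 5 business days.",
}


def tokenize(text: str) -> set:
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question: str, docs: dict, k: int = 2) -> list:
    """Return up to k document IDs ranked by word overlap with the question."""
    q = tokenize(question)
    scored = [(len(q & tokenize(body)), doc_id) for doc_id, body in docs.items()]
    ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: (-s[0], s[1]))
    return [doc_id for _, doc_id in ranked[:k]]


def build_grounded_prompt(question: str, docs: dict, k: int = 2) -> Optional[str]:
    """Build a prompt that carries retrieved context, or None if nothing matched."""
    hits = retrieve(question, docs, k)
    if not hits:
        return None  # nothing to ground on: the caller should abstain, not guess
    context = "\n".join(f"[{doc_id}] {docs[doc_id]}" for doc_id in hits)
    return (
        "Answer using only the context below and cite document IDs.\n"
        f"Context:\n{context}\n"
        f"Question: {question}"
    )


question = "How many days do I have to request a refund?"
print(build_grounded_prompt(question, DOCS, k=1))
```

Returning `None` when retrieval finds nothing is the important design choice here: it gives the surrounding system an explicit signal to decline or escalate instead of letting the model answer from memory.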

Best for:

- Enterprise knowledge bases

- Legal documentation

- Customer support systems

2️⃣ Structured Output Constraints

Free-form text increases hallucination risk.

Constrain responses using:

- JSON schemas

- Field validation rules

- Mandatory citations

- Predefined templates

Structured outputs are easier to verify programmatically.
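As a rough sketch of what programmatic verification can look like, the snippet below checks a model response against a small hand-written schema using only Python’s standard `json` module. The field names (`answer`, `citations`) and the `validate_response` helper are hypothetical; a real deployment might use a full JSON Schema validator instead.

```python
import json

# Hypothetical schema: field name -> required Python type.
REQUIRED_FIELDS = {"answer": str, "citations": list}


def validate_response(raw: str, known_sources: set) -> dict:
    """Parse a model response and reject it unless it matches the schema."""
    data = json.loads(raw)  # raises json.JSONDecodeError (a ValueError) on bad JSON
    if not isinstance(data, dict):
        raise ValueError("response must be a JSON object")
    for name, expected_type in REQUIRED_FIELDS.items():
        if not isinstance(data.get(name), expected_type):
            raise ValueError(f"missing or invalid field: {name}")
    if not data["citations"]:
        raise ValueError("at least one citation is required")
    # Every citation must point at a document the system actually retrieved.
    unknown = [
        c for c in data["citations"]
        if not isinstance(c, str) or c not in known_sources
    ]
    if unknown:
        raise ValueError(f"citations not in the retrieved set: {unknown}")
    return data


sources = {"refund-policy"}
good = '{"answer": "Refunds are accepted for 30 days.", "citations": ["refund-policy"]}'
print(validate_response(good, sources)["citations"])
```

A response that omits citations, or cites a source the system never retrieved, is rejected before it reaches the user, which is much harder to enforce on free-form text.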

3️⃣ Prompt Engineering with Explicit Boundaries

Ambiguous prompts invite invented answers.

Strong prompts:

- Define role clearly

- Specify output format

- Instruct the model to say “I don’t know” when uncertain

- Limit scope of response

Precision reduces guesswork.
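One way to keep these boundaries consistent is to generate the system prompt from a template rather than writing it by hand each time. The helper below is a hypothetical sketch; the wording of each instruction and the example role and scope are assumptions, and the right phrasing will vary by model and use case.

```python
def build_system_prompt(role: str, scope: str, output_format: str) -> str:
    """Assemble a system prompt that states role, scope, format, and a refusal rule."""
    return "\n".join([
        f"You are {role}.",
        f"Only answer questions about {scope}.",
        f"Respond using this format: {output_format}.",
        "If the answer is not in the provided context, reply exactly: I don't know.",
        "Do not guess, and do not answer questions outside the stated scope.",
    ])


prompt = build_system_prompt(
    role="a support assistant for an internal HR knowledge base",
    scope="company leave and benefits policies",
    output_format="a short paragraph followed by a list of cited document IDs",
)
print(prompt)
```

A prompt like this does not guarantee the model will comply, so it works best alongside the output validation and verification steps rather than in place of them.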

4️⃣ Lower Temperature & Sampling Controls

High temperature = more creativity, more variability

Low temperature = more determinism, more consistency

For factual systems, use conservative generation parameters.

Creativity is optional. Accuracy is not.
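To see why temperature changes variability, it helps to look at what it does to the next-token distribution. The sketch below applies the standard temperature-scaled softmax to a made-up list of logits; the logit values are illustrative only.

```python
import math


def softmax_with_temperature(logits: list, temperature: float) -> list:
    """Convert logits to probabilities after dividing them by the temperature."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


logits = [2.0, 1.0, 0.5]  # hypothetical scores for three candidate tokens
low = softmax_with_temperature(logits, temperature=0.2)
high = softmax_with_temperature(logits, temperature=2.0)

# Low temperature concentrates probability on the top token;
# high temperature spreads it across the alternatives.
print([round(p, 3) for p in low])
print([round(p, 3) for p in high])
```

Note that a low temperature makes outputs more repeatable, not more truthful: if the top-ranked token is wrong, the model will simply be wrong more consistently, which is why this setting is only one of the six strategies.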

5️⃣ Add Verification & Guardrails

Think of this as AI middleware.

Add:

- Fact-check APIs

- Rule engines

- Cross-model validation

- Confidence scoring systems

Responses should pass through a quality filter before reaching users.
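As a small example of such a filter, the rule below flags any number in an answer that does not appear in the source context the answer was supposed to be grounded in. The function names and the fallback message are hypothetical, and this is deliberately narrow: it catches invented figures, not every kind of unsupported claim.

```python
import re

NUMBER = re.compile(r"\d+(?:\.\d+)?")


def unsupported_numbers(answer: str, context: str) -> list:
    """Return numbers that appear in the answer but nowhere in the context."""
    return sorted(set(NUMBER.findall(answer)) - set(NUMBER.findall(context)))


def guard(answer: str, context: str,
          fallback: str = "I could not verify this answer against the sources.") -> str:
    """Pass the answer through only if every number in it is backed by the context."""
    return answer if not unsupported_numbers(answer, context) else fallback


context = "A refund can be requested within 30 days of purchase."
print(guard("You have 30 days to request a refund.", context))
print(guard("You have 45 days to request a refund.", context))
```

Simple rules like this are cheap to run on every response, and several of them can be chained before more expensive checks such as cross-model validation.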

6️⃣ Human-in-the-Loop Oversight

In high-stakes environments — healthcare, finance, law — human validation is critical.

Use:

- Approval workflows

- Escalation triggers

- Audit logs

Automation without governance is risk.
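A minimal sketch of how escalation triggers and an audit log might fit together is shown below. The `ReviewQueue` class, the topic list, and the 0.8 confidence threshold are all assumptions chosen for illustration; how confidence is computed is left to the surrounding system.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone

# Hypothetical list of topics that always require human sign-off.
HIGH_STAKES_TOPICS = {"healthcare", "finance", "law"}


@dataclass
class ReviewQueue:
    pending: list = field(default_factory=list)    # answers awaiting approval
    audit_log: list = field(default_factory=list)  # one record per routing decision

    def route(self, answer: str, topic: str, confidence: float,
              threshold: float = 0.8) -> str:
        """Escalate high-stakes or low-confidence answers; log every decision."""
        needs_review = topic in HIGH_STAKES_TOPICS or confidence < threshold
        decision = "escalated" if needs_review else "auto_approved"
        if needs_review:
            self.pending.append(answer)
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "topic": topic,
            "confidence": confidence,
            "decision": decision,
        })
        return decision


queue = ReviewQueue()
print(queue.route("Draft contract clause", topic="law", confidence=0.97))
print(queue.route("Office opening hours", topic="general", confidence=0.95))
print(queue.route("Unclear product question", topic="general", confidence=0.40))
```

Logging the auto-approved decisions as well as the escalated ones is what makes the system auditable after the fact.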

⚠️ Why This Matters for Businesses

If you’re deploying AI in:

- Healthcare

- Financial services

- Legal tech

- SaaS products

- Government systems

…hallucinations are not just inconvenient — they are liability risks.

A poorly designed AI system can:

- Damage brand credibility

- Cause compliance violations

- Lead to financial loss

- Erode customer trust

Architectural accountability is no longer optional.

🚀 The Bigger Shift: From “Smarter Models” to “Smarter Systems”

Many companies believe upgrading to a larger model will fix hallucinations.

On its own, it won’t.

The future belongs to organizations that design:

- Controlled input pipelines

- Retrieval-grounded architectures

- Multi-layer validation systems

- Transparent audit frameworks

Hallucination management is a systems engineering discipline.

And the companies that understand this early will lead the AI era.