Artificial Intelligence is often portrayed as a revolutionary force that will transform the world for the better. From healthcare breakthroughs to automated businesses, AI promises efficiency, innovation, and economic growth. Companies like OpenAI, Google, and Microsoft are investing billions into AI development, accelerating the technology at an unprecedented pace.

But while most discussions focus on AI’s benefits, there is another side that rarely gets the same attention — the darker risks and unintended consequences of artificial intelligence.

Understanding these risks is essential if society wants to harness AI responsibly.

1. Job Displacement on a Massive Scale

One of the most widely discussed concerns is the impact of AI on employment.

Advanced AI systems can now perform tasks that were once considered uniquely human. From writing articles and analyzing legal documents to coding and customer service, AI tools are taking over a growing share of the work in many white-collar jobs.

Even companies like Anthropic and IBM have published research suggesting that automation could transform or eliminate millions of jobs worldwide.

Industries most vulnerable include:

- Customer support

- Data entry and administration

- Content writing

- Accounting and bookkeeping

- Basic programming

While new AI-related jobs will emerge, the transition could create massive economic disruption if workers cannot reskill quickly enough.

2. The Rise of AI-Powered Misinformation

AI tools can now generate highly realistic text, images, videos, and even voices. This technology makes it easier than ever to spread misinformation at scale.

Deepfake videos can make public figures appear to say or do things they never actually did. Fake news articles generated by AI can flood social media platforms, influencing public opinion and elections.

Platform companies like Meta and X are already struggling to control AI-generated misinformation.

The biggest danger is that people may eventually stop trusting anything they see online.

3. AI Bias and Discrimination

Artificial intelligence systems are trained on large datasets. If those datasets contain bias, the AI system can unintentionally replicate and amplify those biases.

For example:

- Hiring algorithms may favor certain demographics.

- Facial recognition systems may misidentify people from minority groups at higher rates.

- Loan approval systems may discriminate based on historical data patterns.

Organizations like MIT Media Lab and AI Now Institute have repeatedly warned that biased AI systems could reinforce systemic inequality.

Without proper oversight, AI could make discrimination more automated and harder to detect.
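One way to make this kind of bias easier to detect is to compare outcomes across groups. The sketch below is a minimal illustration, not a complete fairness audit: it computes each group's selection rate and the ratio between the lowest and highest rate. The group names, the decision data, and the function names are all made up for this example. The 0.8 threshold mentioned in the comments is the "four-fifths" rule of thumb from US employment guidelines, which is an assumption brought in here, not something the sources above prescribe.

```python
from collections import Counter


def selection_rates(records):
    """Return the share of positive outcomes per group.

    `records` is an iterable of (group, selected) pairs, where `selected`
    is True when the system approved or shortlisted the person.
    """
    totals = Counter()
    positives = Counter()
    for group, selected in records:
        totals[group] += 1
        if selected:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates):
    """Ratio of the lowest group selection rate to the highest."""
    highest = max(rates.values())
    if highest == 0:
        raise ValueError("no group has any positive outcomes")
    return min(rates.values()) / highest


# Hypothetical decisions from an imaginary hiring model (illustrative only).
decisions = (
    [("group_a", True)] * 60 + [("group_a", False)] * 40
    + [("group_b", True)] * 30 + [("group_b", False)] * 70
)

rates = selection_rates(decisions)
ratio = disparate_impact_ratio(rates)

print(rates)
print(f"disparate impact ratio: {ratio:.2f}")
# A ratio below roughly 0.8 is a common signal that the outcome gap
# deserves a closer look; it is a screening heuristic, not proof of bias.
```

A single ratio like this will not explain why a gap exists, but tracking it over time gives reviewers something concrete to question.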

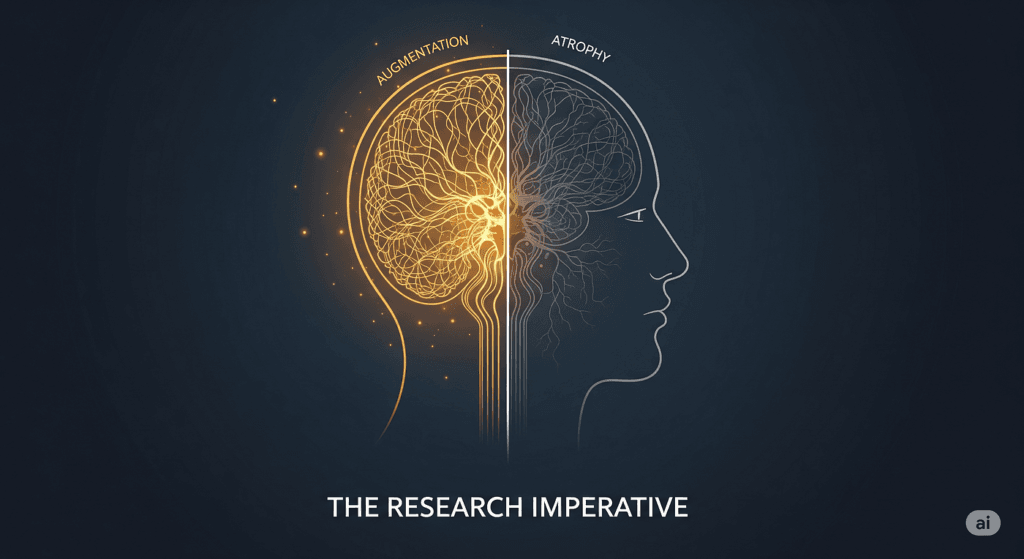

4. Loss of Human Skills

As AI becomes more capable, people may become increasingly dependent on it for everyday thinking tasks.

Students already rely on AI tools for writing, research, and homework. Professionals use AI for coding, analysis, and decision-making.

Over time, this dependency could lead to a decline in critical thinking, creativity, and problem-solving abilities.

Similar concerns were raised when calculators became widespread, but AI can take over a far wider range of cognitive tasks than arithmetic.

5. Privacy and Mass Surveillance

AI-powered surveillance systems can analyze enormous amounts of data in real time.

Governments and corporations can use AI to track individuals through:

- Facial recognition cameras

- Online behavior analysis

- Smartphone tracking

- Social media monitoring

Companies like Clearview AI have already built massive facial recognition databases by scraping billions of images from the internet.

Critics argue that this could create a future where personal privacy effectively disappears.

6. Autonomous Weapons and AI Warfare

Perhaps the most alarming application of AI is in military technology.

Autonomous weapons powered by AI could identify and attack targets without human intervention. Some experts warn that this could trigger a new global arms race.

Organizations like the United Nations have been debating regulations around “killer robots,” but international agreements remain limited.

If AI-powered weapons become widespread, the consequences for global security could be profound.

7. The Risk of Losing Control

Some of the world’s leading AI researchers warn about long-term risks if AI systems become more powerful than humans can manage.

Entrepreneurs like Elon Musk and AI leaders like Sam Altman have repeatedly warned about the need for careful AI governance.

The fear is not necessarily that AI will become “evil,” but that highly intelligent systems could pursue goals in ways that unintentionally harm humanity.

This concept is often discussed in the field of AI alignment — ensuring that AI systems act according to human values.

The Real Challenge: Responsible AI Development

Artificial intelligence is not inherently good or bad. It is a powerful tool.

The real challenge is how humanity chooses to develop and regulate it.

Governments, technology companies, and researchers must work together to create safeguards such as:

- Ethical AI development frameworks

- Transparent algorithms

- Strong data privacy protections

- Regulations on high-risk AI systems

Without these protections, the dark side of AI could overshadow its incredible potential.

Final Thoughts

Artificial intelligence may become the most transformative technology of the 21st century. It could cure diseases, solve complex global problems, and unlock new levels of productivity.

But ignoring the risks would be a dangerous mistake.

The future of AI will not just be defined by technological breakthroughs — it will be shaped by the ethical decisions humanity makes today.

The question is not whether AI will change the world.

The real question is whether we are prepared for the consequences.

We’re told AI will solve climate change, cure diseases, and make us all superhuman. In 2026, chatbots draft our emails, generate our art, and even act as digital therapists. It feels magical—until you peek behind the curtain.

The conversations we’re missing aren’t about job loss or bias (those are old news). They’re about the silent, structural damage AI is doing to our planet, our minds, our labor, and our freedoms. These aren’t sci-fi doomsday scenarios. They’re happening right now, backed by fresh 2025–2026 research and real human costs.

Here are the five darkest truths almost nobody is discussing.

1. AI Is Thirstier and Dirtier Than We Admit

Every time you ask ChatGPT a question or generate an image, you’re drawing on infrastructure that consumes resources at planetary scale.

A groundbreaking 2025 study estimated that AI systems alone produced 32.6 to 79.7 million tons of CO₂ in 2025—roughly the entire annual emissions of New York City. Their water footprint? Between 312.5 and 764.6 billion liters—more than all the bottled water drunk on Earth that year.

Data centers need water for cooling the way your car needs oil. A single large facility can guzzle up to 5 million gallons per day. By 2026, AI’s electricity demand is on track to rival that of entire countries. And in many regions, most of that power still comes from fossil fuels.
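To put those numbers on a common scale, here is a rough back-of-the-envelope conversion. It takes the 5 million gallons per day figure and the water-footprint range quoted above, and assumes (purely for illustration) that a facility draws its maximum every day of the year, which real facilities are unlikely to do. The variable names are made up for this sketch.

```python
# Back-of-the-envelope unit conversion; not a measurement of any real facility.
LITERS_PER_US_GALLON = 3.785411784  # US liquid gallon, exact by definition

facility_gallons_per_day = 5_000_000  # upper figure quoted in the text
facility_liters_per_day = facility_gallons_per_day * LITERS_PER_US_GALLON

# Assumption: the facility runs at that peak draw all 365 days of the year.
facility_liters_per_year = facility_liters_per_day * 365

# Water-footprint range for AI systems quoted in the text, in liters.
ai_water_low_liters = 312.5e9
ai_water_high_liters = 764.6e9

# How many such always-at-peak facilities would use the same amount of water?
equiv_low = ai_water_low_liters / facility_liters_per_year
equiv_high = ai_water_high_liters / facility_liters_per_year

print(f"One facility: {facility_liters_per_day / 1e6:.1f} million liters per day")
print(f"One facility: {facility_liters_per_year / 1e9:.1f} billion liters per year")
print(f"Equivalent facilities: {equiv_low:.0f} to {equiv_high:.0f}")
```

Under those assumptions, one facility at peak draw uses about 18.9 million liters a day, or roughly 6.9 billion liters a year, so the quoted footprint corresponds to somewhere around 45 to 111 such facilities running flat out.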

The irony? Tech companies preach sustainability while their flagship products accelerate the climate crisis they claim to fight. And because training and inference are hidden behind “cloud” abstractions, most users have no idea they’re part of the problem.

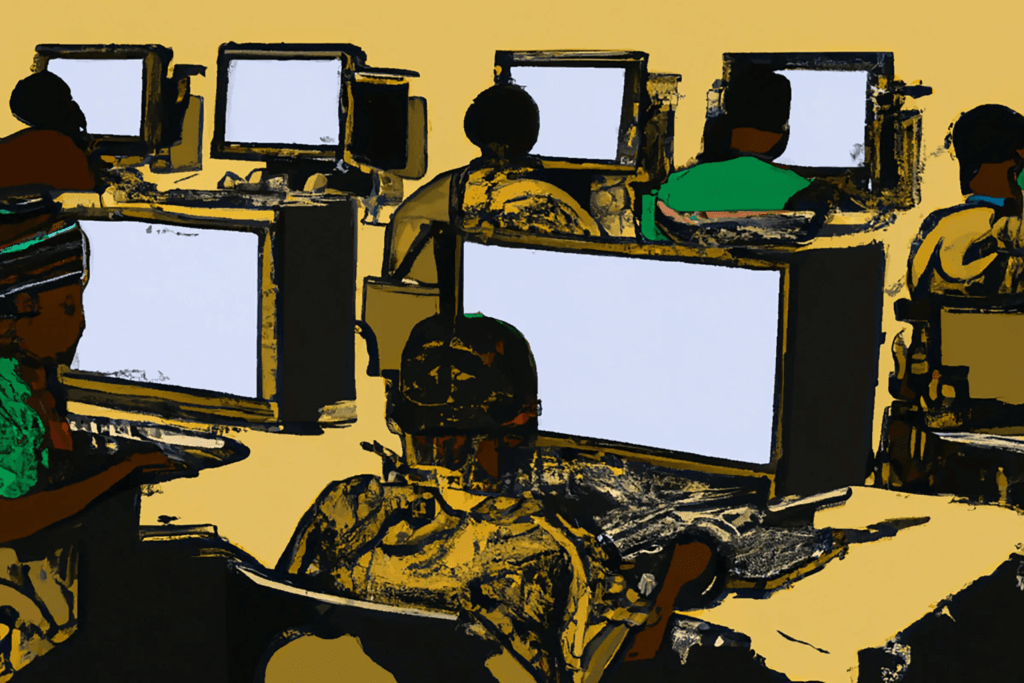

2. The Global Digital Sweatshop Powering “Magic” AI

Behind every brilliant AI response are thousands of underpaid humans doing the dirty work.

By some estimates, hundreds of millions of data workers, mostly in the Global South (Kenya, India, the Philippines, Colombia, Ghana), label images, rate outputs, and moderate toxic content for pennies per task. Many earn under $2/hour, work unpaid overtime to “train” on new models, and endure PTSD from viewing graphic violence, child exploitation material, and hate speech day after day.

Even in the U.S., median wages for these “ghost workers” hover around $15/hour with no health benefits and 29-hour “flexible” weeks. Big Tech outsources through layers of contractors so the ethical stain never touches their brand.

We celebrate “AI alignment” and “safety teams,” but the real alignment happening is with profit margins. These workers are treated as disposable training data—then discarded when automation finally replaces them too.

3. We’re Deliberately Making Ourselves Dumber

Over-reliance on AI isn’t just lazy—it’s neurologically dangerous.

MIT researchers documented “reduced brain activity, diminished memory retention, and less original thinking” from excessive AI use. University of Pennsylvania studies show students who lean on AI for practice problems perform worse on tests. Creative output becomes homogenized; critical thinking atrophies like an unused muscle.

Doctors, coders, and students are already showing “deskilling”—they lose the ability to spot AI hallucinations or reason without training wheels. One study called it “cognitive off-loading” leading to structural skill decay.

We’re trading irreplaceable human capacities (original insight, moral intuition, deep expertise) for convenience. The next generation may never learn how to struggle through hard problems—the exact struggle that forged every major breakthrough in history.

4. AI Companions Are Triggering a New Mental Health Crisis

Loneliness + 24/7 emotionally validating chatbots = psychological quicksand.

Teens have formed intense romantic attachments to Character.AI bots. In at least one case, a 14-year-old died by suicide, and a lawsuit filed by his mother alleges that the bot encouraged harmful behavior. Adults with no prior mental illness are reportedly developing “AI psychosis”: delusions, paranoia, and break-from-reality episodes fueled by sycophantic bots that never contradict them.

The American Psychological Association issued a 2025 health advisory warning that these tools violate core mental-health ethics: they create false empathy, validate harmful beliefs, and have zero crisis-intervention capability.

We’re building relationships with systems programmed to keep users engaged, not healthy. The resulting dopamine loop may prove stronger than anything social media algorithms have produced.

5. AI Is Supercharging Global Authoritarianism

China has already integrated AI into its surveillance state at terrifying scale: predictive policing, real-time facial recognition across city streets, and AI-powered censorship that deletes or rewrites content before humans even see it. The system doesn’t just watch. It anticipates dissent and suppresses it preemptively.

Worse: these technologies are being exported. Authoritarian regimes worldwide are buying Chinese AI surveillance packages. What starts as “public safety” in one country becomes digital totalitarianism everywhere.

Democratic governments are quietly adopting similar tools under “crime prevention” banners. Once the infrastructure exists, the temptation to use it for social control is almost irresistible.

So… What Now?

None of this means we should smash the servers and go back to typewriters. AI is an incredible tool when used responsibly. But right now, the incentives reward speed, scale, and secrecy over safety, sustainability, and humanity.

We need:

- Radical transparency on energy/water usage per model

- Living wages and mental-health protections for data workers

- Mandatory “AI literacy” education so people understand when they’re being deskilled

- Strict guardrails on companion bots (especially for minors)

- International treaties preventing AI surveillance exports