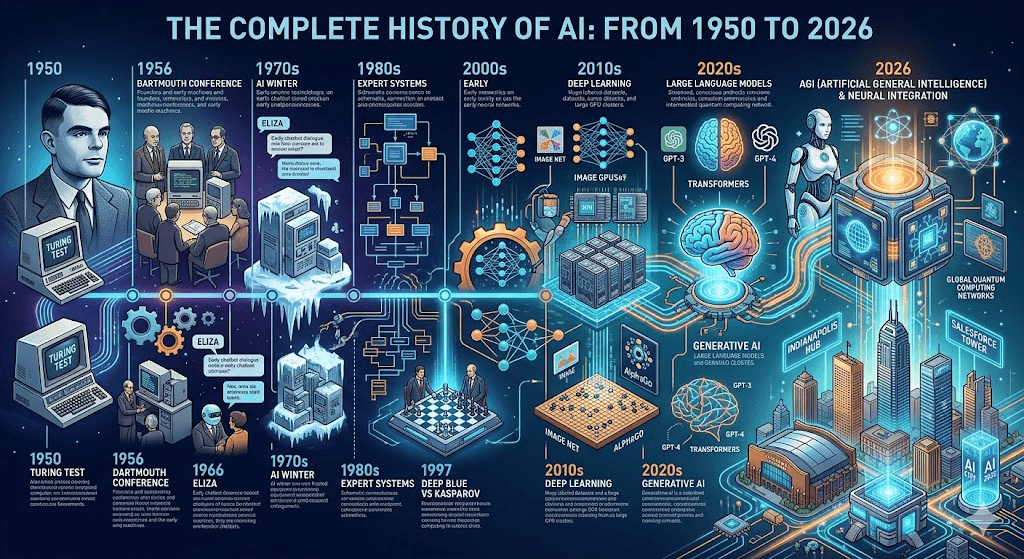

Artificial Intelligence has journeyed from a bold philosophical idea in the mid-20th century to a transformative force shaping much of daily life in 2026. What began as theoretical questions about whether machines could “think” has evolved into powerful multimodal models, autonomous agents, and tools that generate text, images, video, and code at a level that rivals skilled humans on many tasks.

This complete history traces AI’s remarkable path—marked by periods of explosive optimism (AI summers) and sobering disappointments (AI winters)—up to the dynamic landscape of early 2026.

1950s: The Birth of AI – Dreams and Foundations

The story of modern AI starts with Alan Turing. In his 1950 paper “Computing Machinery and Intelligence”, Turing posed the famous question: “Can machines think?” He introduced the Turing Test (originally called the Imitation Game), a benchmark for machine intelligence based on whether a machine could converse indistinguishably from a human.

In 1956, John McCarthy, Marvin Minsky, Nathaniel Rochester, and Claude Shannon organized the Dartmouth Summer Research Project on Artificial Intelligence. Their 1955 proposal for the workshop coined the term “artificial intelligence” and rested on the ambitious conjecture that “every aspect of learning or any other feature of intelligence can in principle be so precisely described that a machine can be made to simulate it.” Many historians mark the workshop as the official birth of AI as a field.

Key early breakthroughs included:

- 1952: Arthur Samuel began developing a checkers program at IBM. By the mid-1950s it could learn from experience, making it one of the first programs to improve itself through play.

- 1957: Frank Rosenblatt invented the Perceptron, an early artificial neural network capable of simple pattern recognition.

- 1958: John McCarthy created LISP, the programming language that dominated early AI research.
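To make the Perceptron entry above concrete, here is a minimal sketch of the perceptron learning rule in plain Python. It is an illustration under stated assumptions, not Rosenblatt's original implementation (which was built around custom hardware): the function names, the learning rate, the epoch count, and the choice of the logical AND function as training data are all hypothetical choices made for this example.

```python
def train_perceptron(samples, epochs=20, lr=1.0):
    """Learn weights and a bias with the classic perceptron update rule.

    `samples` is a list of (inputs, target) pairs with targets 0 or 1.
    The epoch count and learning rate are illustrative defaults.
    """
    n_inputs = len(samples[0][0])
    weights = [0.0] * n_inputs
    bias = 0.0
    for _ in range(epochs):
        for inputs, target in samples:
            activation = sum(w * x for w, x in zip(weights, inputs)) + bias
            prediction = 1 if activation > 0 else 0
            # The error is 0 when the guess is right, so weights only
            # move after a mistake.
            error = target - prediction
            weights = [w + lr * error * x for w, x in zip(weights, inputs)]
            bias += lr * error
    return weights, bias


def predict(weights, bias, inputs):
    """Return 1 if the weighted sum crosses the threshold, else 0."""
    activation = sum(w * x for w, x in zip(weights, inputs)) + bias
    return 1 if activation > 0 else 0


# Logical AND is linearly separable, so a single perceptron can learn it.
AND_DATA = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]
weights, bias = train_perceptron(AND_DATA)
learned = [predict(weights, bias, inputs) for inputs, _ in AND_DATA]
```

A single perceptron can only separate classes with a straight line (or hyperplane), which is why it handles AND but not XOR. That limitation became a central criticism of early neural networks.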

This era was filled with optimism. Many researchers believed human-level intelligence was only a couple of decades away.

1960s–Early 1970s: Early Excitement and the First AI Summer

The 1960s saw practical demonstrations that captured public imagination:

- 1961: Unimate, the first industrial robot, began working on a General Motors assembly line.

- 1966: Joseph Weizenbaum at MIT created ELIZA, one of the first chatbots. Its best-known script, DOCTOR, simulated a Rogerian psychotherapist by rephrasing user statements as questions, leading some users to believe it understood them.
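The rephrasing trick behind ELIZA can be sketched in a few lines of Python. This is a toy reconstruction of the general idea (match a pattern, swap pronouns, fill a question template), not Weizenbaum's actual script or code. The rules, the pronoun table, and the function names below are all invented for illustration.

```python
import re

# Pronoun swaps applied to the captured fragment, so "my" becomes "your".
REFLECTIONS = {
    "i": "you", "me": "you", "my": "your",
    "am": "are", "you": "I", "your": "my",
}

# (pattern, response template) pairs; hypothetical rules for this sketch.
RULES = [
    (re.compile(r"\bi feel (.*)", re.IGNORECASE), "Why do you feel {0}?"),
    (re.compile(r"\bi am (.*)", re.IGNORECASE), "How long have you been {0}?"),
]


def reflect(fragment: str) -> str:
    """Swap first- and second-person words in the captured fragment."""
    words = fragment.lower().split()
    return " ".join(REFLECTIONS.get(word, word) for word in words)


def respond(statement: str) -> str:
    """Turn a statement into a question using the first matching rule."""
    text = statement.strip().rstrip(".!?")
    for pattern, template in RULES:
        match = pattern.search(text)
        if match:
            return template.format(reflect(match.group(1)))
    # Fallback when no rule matches, a generic prompt to keep talking.
    return "Please go on."


print(respond("I feel sad about my job."))
print(respond("The weather is nice."))
```

The first call prints `Why do you feel sad about your job?` and the second falls through to `Please go on.` Nothing here models meaning. The program only rearranges the user's own words, which is what made the illusion of understanding so striking.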

Symbolic AI (rule-based systems) thrived, and early neural networks drew attention until Minsky and Papert’s 1969 book Perceptrons highlighted the limits of single-layer networks. Broader limitations soon emerged as well: computers lacked the power and data for complex real-world tasks, and ambitious promises (like solving all mathematical problems or achieving general intelligence) went unfulfilled.

1974–1980: The First AI Winter

By the mid-1970s, hype met reality. The 1973 Lighthill Report in the UK harshly criticized AI research for failing to deliver on its grand claims, leading to drastic funding cuts. DARPA and other agencies reduced support. This period, known as the first AI winter, saw diminished interest and resources as expectations outpaced technological capabilities.

1980s: Expert Systems and a Brief Revival

Interest rebounded with expert systems, rule-based programs that mimicked human decision-making in narrow domains like medicine and finance. Japan’s Fifth Generation Computer Systems project (launched in 1982) and commercial successes (e.g., XCON at Digital Equipment Corporation) fueled a mini-boom.

- 1986: Ernst Dickmanns demonstrated an early driverless car in Germany.

- 1986: Neural network research quietly advanced when Rumelhart, Hinton, and Williams popularized backpropagation, making it practical to train multi-layer networks.

Yet, expert systems proved brittle and expensive to maintain. When they failed to scale broadly, funding dried up again.

Late 1980s–1990s: The Second AI Winter

The second AI winter (roughly 1987–2000) brought another lull. Progress stalled on symbolic approaches, and neural networks were largely sidelined due to computational limits. AI research shifted toward more modest, practical applications.

1990s–2000s: Quiet Progress and the Rise of Machine Learning

Despite the winter, foundational work continued:

- 1997: IBM’s Deep Blue defeated world chess champion Garry Kasparov in a landmark match, showing that large-scale search combined with a carefully tuned evaluation function could beat the best human player at chess.

- Advances in machine learning, statistics, and probabilistic methods laid groundwork for the next wave.

- 1997: Long Short-Term Memory (LSTM) networks, a refinement of Recurrent Neural Networks (RNNs), improved handling of long sequential data like speech and text.

The real turning point came with big data, cheaper computing (especially GPUs), and the resurgence of neural networks.

2010s: Deep Learning Revolution and the Current AI Summer

The modern AI boom ignited around 2012 with deep learning breakthroughs:

- 2011: IBM Watson won Jeopardy!, showcasing natural language question answering.

- 2012: AlexNet won the ImageNet competition by a wide margin, dramatically advancing image recognition.

- 2016: Google DeepMind’s AlphaGo defeated Go champion Lee Sedol 4–1. Go’s immense complexity (more legal board positions than atoms in the observable universe) made this a profound demonstration of reinforcement learning and neural networks combined with tree search.

2017 brought the Transformer architecture (the “Attention Is All You Need” paper), which revolutionized natural language processing and became the foundation for modern large language models.

- 2018–2020: OpenAI released GPT-1, GPT-2, and then GPT-3 (175 billion parameters), capable of generating remarkably human-like text, code, and more.

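- 2021–2022: The original DALL·E (2021) generated images from text with an autoregressive Transformer. Diffusion models then enabled higher-quality image generation, as in DALL·E 2 (2022).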

2022–2024: The Generative AI Explosion

The public awakening came in November 2022 with ChatGPT, built on GPT-3.5. It reached a million users within days, and people quickly adopted it for writing, coding, brainstorming, and learning. Multimodal capabilities expanded rapidly:

- Text-to-image tools like Midjourney and DALL·E became mainstream.

- 2023–2024: Models gained larger context windows, better reasoning, and integration into everyday tools (Microsoft Copilot, Google Gemini).

Regulatory attention grew, with the EU AI Act, which entered into force in August 2024, and various national frameworks addressing risks.

2025–2026: Agentic AI, Multimodality, and the Path to Partnership

By 2025, AI shifted from impressive demos to practical agents capable of multi-step planning, autonomous execution, and long-horizon tasks. Reasoning models (like OpenAI’s o1 series) emphasized chain-of-thought and self-correction.

Key 2025–early 2026 developments include:

- Frontier models like GPT-5.4 (OpenAI), Claude 4/4.6 series (Anthropic), Gemini 3.1 (Google), and Grok 4 (xAI) competing fiercely across coding, reasoning, writing, and multimodality. Pricing dropped dramatically, making advanced capabilities more accessible.

- Agentic workflows: AI agents that browse, code, research, and execute complex projects with minimal supervision.

- Multimodal and video generation: Tools like Sora 2 (OpenAI), Veo 3/3.1 (Google), and others produce near-production-quality video with sound, physics, and narrative consistency.

- Memory features, longer contexts, and specialized open-weight models (e.g., Qwen 3.5, DeepSeek) expanded options.

- Focus on governance, security, and real-world value as enterprises scaled AI adoption.

In 2026, AI is transitioning from a helpful tool to a collaborative partner—enhancing scientific research, creative work, coding, and business processes while raising important questions about ethics, regulation, and human-AI symbiosis.

Lessons from AI’s Cyclical History

AI’s story is one of repeated cycles: grand visions → overhyped expectations → technical limitations → funding cuts → steady foundational progress → breakthrough.

Each winter forced researchers to refine approaches, leading to stronger summers. Today’s boom benefits from massive compute, vast datasets, and lessons from past failures—emphasizing reliability, alignment, and practical impact over pure hype.

Looking Ahead

As of March 2026, AI continues accelerating. Trends point toward more autonomous agents, cheaper and more efficient models, deeper multimodal integration, and responsible deployment. While true artificial general intelligence remains a debated horizon, narrow and specialized AI already delivers immense value.

The history of AI reminds us that progress is rarely linear—but persistent human curiosity and ingenuity keep pushing the boundaries of what’s possible.

What aspect of AI’s history fascinates you most? Or which current capability feels most like science fiction come true? Share your thoughts in the comments.
