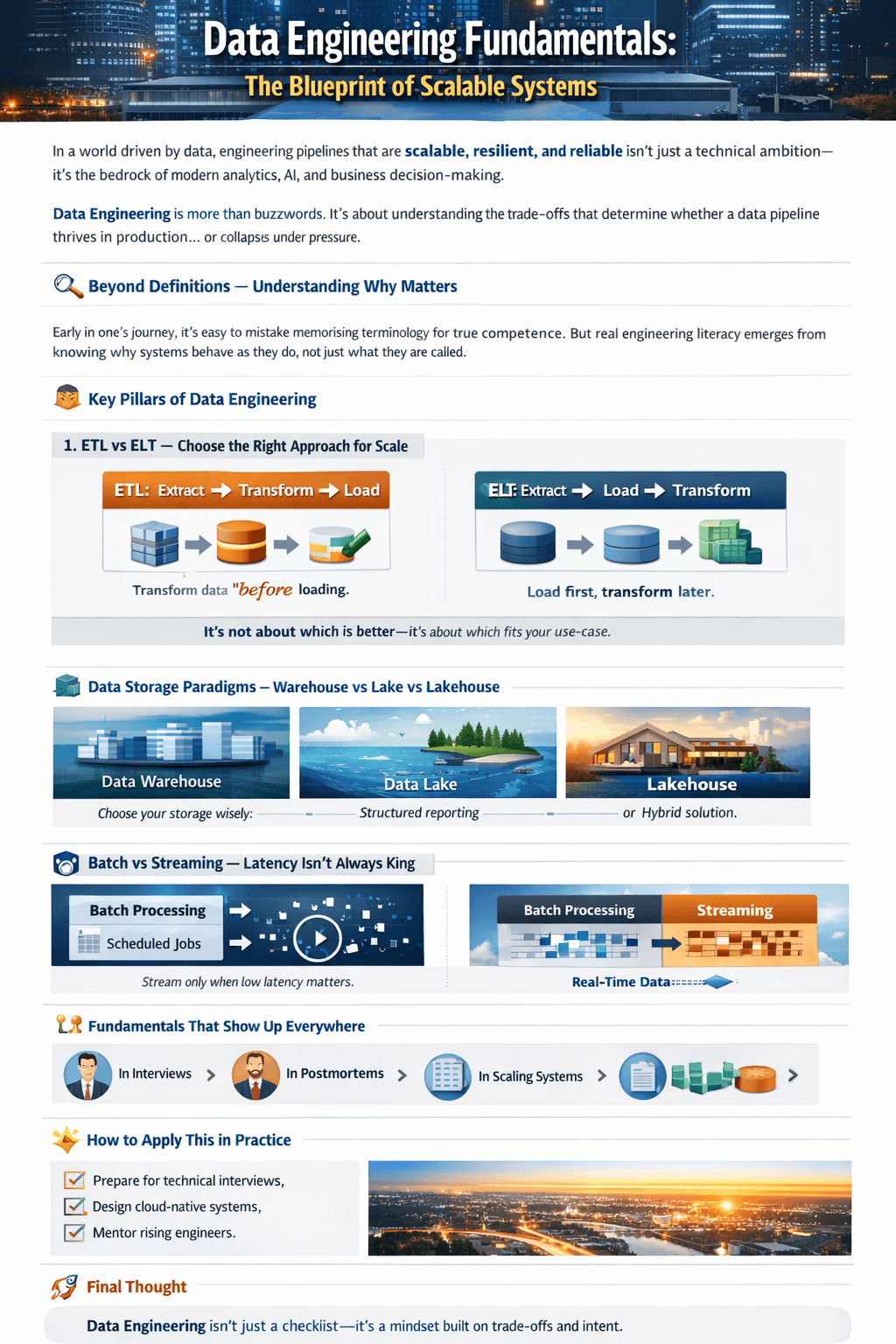

IIn a world driven by data, engineering pipelines that are scalable, resilient, and reliable isn’t just a technical ambition—it’s the bedrock of modern analytics, AI, and business decision-making. Data Engineering is more than buzzwords. It’s about understanding the trade-offs that determine whether a data pipeline thrives in production… or collapses under pressure.

🔍 Beyond Definitions — Understanding Why Matters

Early in one’s journey, it’s easy to mistake memorizing terminology for true competence. But real engineering literacy emerges from knowing why systems behave as they do, not just what they are called. This distinction, echoed in foundational concepts, is what separates a novice from an impactful engineer.

🧠 Key Pillars of Data Engineering

1. ETL vs ELT — Choose the Right Approach for Scale

- ETL (Extract, Transform, Load):

- Transforms data before loading it.

- Optimal when compute is expensive or transformations are heavy.

- ELT (Extract, Load, Transform):

- Loads data first and transforms later.

- Powerful in cloud environments where compute scales on demand.

👉 It’s not about which is better — it’s about which fits your use-case.

2. Data Storage Paradigms — Warehouse vs Lake vs Lakehouse

Understanding the storage layer of your data architecture is non-negotiable:

- Data Warehouse: Designed for structured, analytics-ready reporting.

- Data Lake: Flexible repository for raw, unstructured data.

- Lakehouse: Attempts to balance performance with flexibility — but only succeeds with strong governance.

3. Batch vs Streaming — Latency Isn’t Always King

Batch processing still powers most analytical workloads.

- Use streaming only when low latency delivers measurable business value.

- Otherwise, streaming adds complexity without return.

4. OLTP vs OLAP — Understand Your Workload

Mixing online transaction processing with analytic workloads leads to operational chaos. These paradigms are distinct, and misalignment can quickly erode both performance and reliability.

📌 The Invisible Forces of Reliable Systems

A pipeline rarely fails because of code. Instead, failures often trace back to:

- Poor dependency handling

- Lack of orchestration and retries

- Unspecified service-level agreements (SLAs)

- Data quality blind spots

Those aren’t development problems — they’re engineering decisions.

🧰 Fundamentals That Show Up Everywhere

These building blocks aren’t abstract:

- In the interview room

- In incident postmortems

- In dashboards that silently break

- In systems that scale over time

They shape everything that comes after.

✨ How to Apply This in Practice

Whether you’re:

- Preparing for technical interviews,

- Designing cloud-native systems, or

- Mentoring a rising engineer,

revisit these foundational concepts often. They’ll ground decisions that matter more than any syntax cheat sheet.

🚀 Final Thought

Data Engineering isn’t a checklist of skills — it’s a mindset built on understanding trade-offs, system design, and intent. Master the fundamentals, and everything else becomes a tool, not a crutch