In today’s technology landscape, data engineering is often equated with mastering the latest frameworks, pipelines, and platforms. But as Vivek Pandey insightfully points out on LinkedIn, this tool-centric lens misses what truly defines excellence in the field.

The Temptation of Tools

It’s easy to be enamored with Spark, Airflow, dbt, Kafka, Snowflake, and every new entrant in the modern data stack. These tools are powerful — they help us ingest, process, transform, and serve vast volumes of data. But expertise in tools alone doesn’t equate to engineering mastery.

This tool-focused mindset often produces engineers who can operate pipelines but struggle to explain why systems behave the way they do under load, to manage reliability at scale, or to architect data platforms that outlive any specific framework.

Fundamentals: The Core of Engineering

What separates a tool user from an engineer is a deep understanding of fundamental principles: the architecture and logic that make systems robust, efficient, and maintainable. According to Riya Khandelwal, these fundamentals include:

- Data Modeling & Architecture: Knowing how OLTP differs from OLAP, when to use star or snowflake schemas, and how medallion architecture shapes data flow.

- Distributed Systems Principles: Concepts like partitioning, sharding, and the CAP theorem are not academic — they explain why your system performs (or fails) under stress.

- Pipeline Design: Recognizing the nuances between batch and streaming, enforcing idempotency, and building systems that don’t break under scale.

- Performance Engineering: Reading query plans, choosing columnar file formats such as Parquet, and partitioning data to match query patterns aren’t “tuning at the end”; they are architectural planning.

- Governance and Reliability: SLAs, data quality checks, schema evolution, and observability are essential if stakeholders are to trust the data.

- Cost and Scalability: True scalability isn’t just adding nodes — it’s architecting a system where compute and storage work in harmony without runaway costs.

These fundamentals compound over time. Tools come and go, but deep principles carry engineers from merely running systems to designing them.

Why Fundamentals Matter More Than Ever

In an era of rapid innovation, trends shift quickly. Yesterday’s popular tool can become tomorrow’s legacy system. But the why behind data movement — how data should be modeled for trust and clarity, how distributed systems behave, and how to build pipelines that are resilient, observable, and cost-efficient — remains stable over time.

Engineers who anchor themselves in fundamentals don’t just build for today’s stack; they are equipped to adapt gracefully as technology evolves. They’re the ones who can:

- Diagnose root causes instead of patching symptoms.

- Communicate architectural decisions clearly to stakeholders.

- Anticipate how systems behave under unexpected load.

- Design solutions that balance performance, reliability, and cost.

From Tool Users to Architects

Becoming a senior engineer, architect, or thought leader isn’t about accumulating badges for every tool in the ecosystem. It’s about developing a system-level mindset:

Understand the problem deeply before selecting the tool. Build with intent, not by trend.

This shift is what elevates someone from writing code that works to crafting systems that endure.

1️⃣ Technical Version

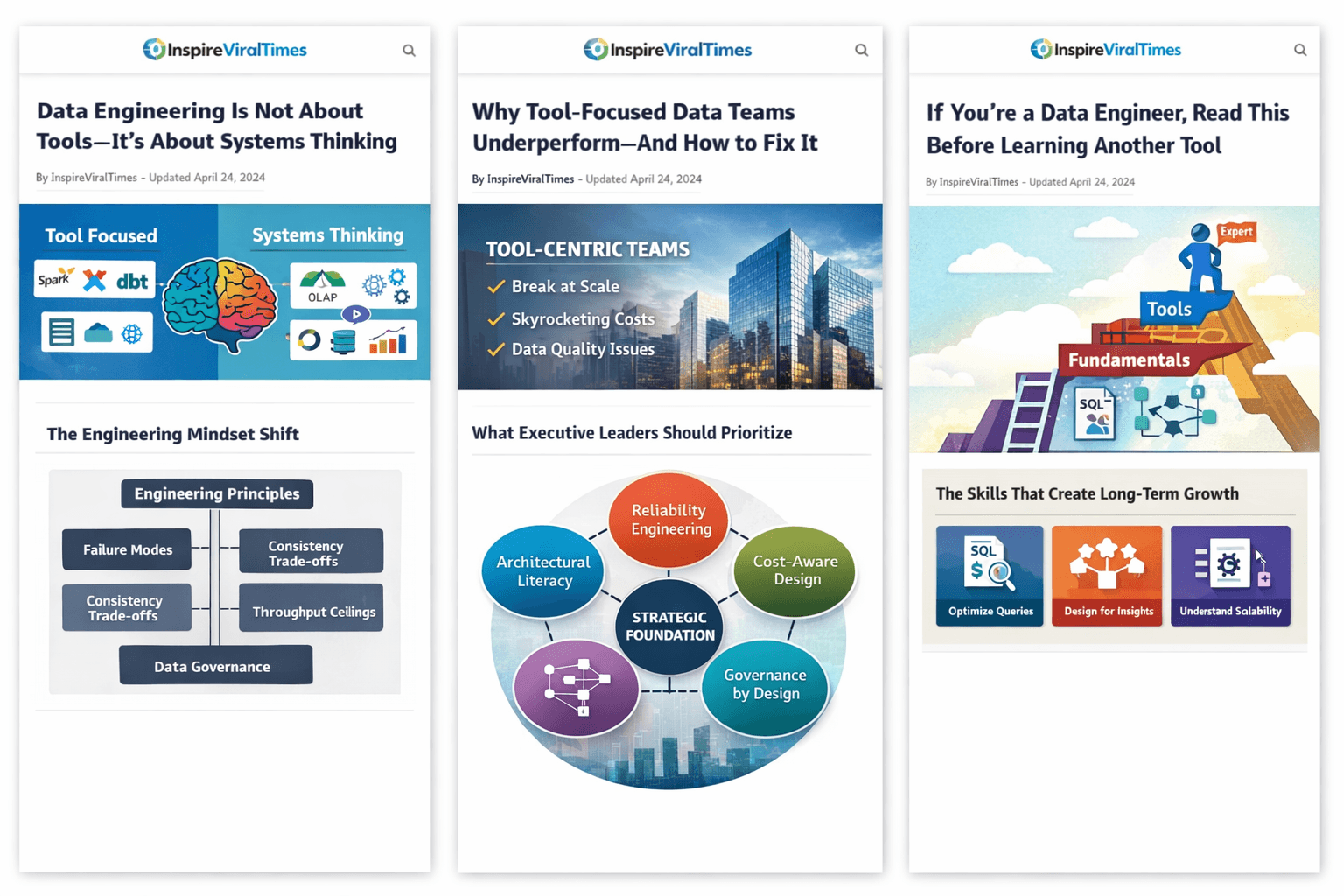

Data Engineering Is Not About Tools — It’s About Systems Thinking

In modern data teams, tool fluency is often mistaken for engineering maturity. Spark certifications, dbt macros, Airflow DAGs — they look impressive. But real data engineering depth lies beneath the tooling layer.

As highlighted by Riya Khandelwal, most engineers are tool-focused; few are fundamentals-focused. And that distinction defines long-term impact.

The Tool Trap

The modern stack evolves rapidly:

- Distributed compute engines

- Cloud-native warehouses

- Streaming frameworks

- ELT orchestration platforms

But frameworks abstract complexity — they don’t eliminate it. Without understanding distributed systems behavior, storage engines, and query planning, engineers become operators rather than architects.

The Foundational Layer That Actually Matters

1. Data Modeling Strategy

Understanding OLTP vs OLAP workloads, dimensional modeling, normalization trade-offs, and fact grain design determines downstream performance and usability more than any transformation tool.
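To make fact grain concrete, here is a minimal sketch of a star schema using Python's built-in `sqlite3` module. The table names (`fact_order_line`, `dim_product`), columns, and sample rows are hypothetical and chosen only for illustration. The fact table's grain is one row per order line, which is what lets revenue roll up cleanly by any dimension attribute.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
-- Dimension: one row per product (hypothetical schema).
CREATE TABLE dim_product (
    product_key INTEGER PRIMARY KEY,
    category    TEXT NOT NULL
);
-- Fact: grain is one row per order line, enforced by the primary key.
CREATE TABLE fact_order_line (
    order_id    TEXT    NOT NULL,
    line_no     INTEGER NOT NULL,
    product_key INTEGER NOT NULL REFERENCES dim_product(product_key),
    quantity    INTEGER NOT NULL,
    amount      REAL    NOT NULL,
    PRIMARY KEY (order_id, line_no)
);
INSERT INTO dim_product VALUES (1, 'books'), (2, 'games');
INSERT INTO fact_order_line VALUES
    ('o1', 1, 1, 2, 20.0),
    ('o1', 2, 2, 1, 50.0),
    ('o2', 1, 1, 1, 10.0);
""")

def revenue_by_category(connection):
    """Aggregate the fact table by a dimension attribute."""
    rows = connection.execute("""
        SELECT d.category, SUM(f.amount)
        FROM fact_order_line AS f
        JOIN dim_product AS d USING (product_key)
        GROUP BY d.category
        ORDER BY d.category
    """).fetchall()
    return dict(rows)

print(revenue_by_category(conn))
```

If the grain were one row per order instead, the category breakdown above could not be computed without going back to the source system, which is why grain is worth deciding before picking a transformation tool.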

2. Distributed Systems Principles

Partitioning, replication, consensus models, fault tolerance — these concepts explain:

- Why your Spark job skews

- Why Kafka consumers lag

- Why warehouse queries spill to disk
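Skew in particular can be reasoned about without any framework. The sketch below is plain Python, not Spark: it hash-partitions two hypothetical workloads into 8 partitions and compares the largest partition with the mean. The key names and the 90% "hot customer" share are assumptions made up for the example.

```python
import hashlib
from collections import Counter

def partition_for(key: str, num_partitions: int) -> int:
    """Map a key to a partition with a stable hash, as hash partitioning does."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_partitions

def partition_sizes(keys, num_partitions: int) -> list:
    """Count how many records land in each partition."""
    counts = Counter(partition_for(key, num_partitions) for key in keys)
    return [counts.get(p, 0) for p in range(num_partitions)]

def skew_ratio(sizes) -> float:
    """Largest partition divided by the mean size (1.0 means perfectly even)."""
    mean = sum(sizes) / len(sizes)
    return max(sizes) / mean if mean else 0.0

# Hypothetical workloads: one hot key producing 90% of records vs. distinct keys.
hot_keys = ["customer_0"] * 9000 + [f"customer_{i}" for i in range(1, 1001)]
even_keys = [f"customer_{i}" for i in range(10000)]

hot_sizes = partition_sizes(hot_keys, 8)
even_sizes = partition_sizes(even_keys, 8)
print("hot-key workload:", hot_sizes, round(skew_ratio(hot_sizes), 2))
print("even workload:   ", even_sizes, round(skew_ratio(even_sizes), 2))
```

Every record with the same key lands in the same partition, so adding more partitions does not help the hot key. A distributed job usually waits on its largest partition, so the skew ratio roughly tracks how much slower the job runs than an evenly balanced one.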

3. Idempotent & Reliable Pipeline Design

Retries, late-arriving data, deduplication strategies, schema evolution, backfills — these are architectural decisions, not tool settings.
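One way to see why these are design decisions is a small idempotent upsert, sketched below under simple assumptions: each record carries a business key and an update timestamp, and the newest version of a key wins. The `Order` type, its fields, and `apply_batch` are hypothetical names for illustration, and a dictionary stands in for the target table.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Order:
    order_id: str    # business key used for deduplication
    updated_at: int  # event time of this version (epoch seconds)
    status: str

def apply_batch(table: dict, batch: list) -> dict:
    """Upsert by order_id, keeping the version with the latest updated_at.

    Returns a new table. Applying the same batch again changes nothing,
    so retries and backfills are safe.
    """
    result = dict(table)
    for order in batch:
        current = result.get(order.order_id)
        # Strictly newer versions win; ties and older (late) versions are ignored.
        if current is None or order.updated_at > current.updated_at:
            result[order.order_id] = order
    return result

first_load = [Order("o1", 100, "created"), Order("o1", 200, "shipped")]
late_arrival = [Order("o1", 150, "paid")]  # older version delivered afterwards

table = apply_batch({}, first_load)
after_retry = apply_batch(table, first_load)    # simulated retry of the same batch
after_late = apply_batch(table, late_arrival)   # late data must not regress state
print(table["o1"].status, after_retry == table, after_late == table)
```

The same rule (merge on a key, compare a version column) can be expressed in most warehouses and processing engines; what matters is that the rule is chosen deliberately rather than left to a tool default.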

4. Storage & Compute Economics

Columnar formats (Parquet/ORC), compression strategies, clustering keys, and workload isolation directly affect cloud spend and scalability.
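The cost effect of layout can be estimated with simple arithmetic. The sketch below assumes a hypothetical table stored as 30 daily partitions of 1 GiB each and compares the bytes a query has to read with and without a date filter that the engine can use to skip partitions. The sizes and the `bytes_scanned` helper are illustrative assumptions, not measurements.

```python
from typing import Dict, Optional, Set

GIB = 1024 ** 3

def bytes_scanned(partition_sizes: Dict[str, int],
                  wanted: Optional[Set[str]] = None) -> int:
    """Bytes a query must read: every partition, or only those matching the filter."""
    if wanted is None:
        return sum(partition_sizes.values())
    return sum(size for key, size in partition_sizes.items() if key in wanted)

# Hypothetical table: 30 daily partitions of 1 GiB each.
daily = {f"2024-01-{day:02d}": GIB for day in range(1, 31)}

full_scan = bytes_scanned(daily)
one_day = bytes_scanned(daily, {"2024-01-15"})
print(full_scan // GIB, "GiB without pruning vs", one_day // GIB, "GiB with pruning")
```

Where pricing or runtime depends on data scanned, this ratio carries straight through to cost, and it is why the partition key should follow the most common filter.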

5. Observability & Data Contracts

Metrics, lineage, SLAs, and quality validation transform pipelines into production-grade systems.
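A data contract can start as something very small. The sketch below assumes a hypothetical contract that maps field names to expected Python types and produces a per-batch quality report. Real contracts usually also cover nullability, ranges, and freshness, which are left out here.

```python
# Hypothetical contract: field name -> expected Python type.
CONTRACT = {"order_id": str, "amount": float, "currency": str}

def validate_row(row: dict, contract: dict) -> list:
    """Return a list of human-readable violations for one row."""
    errors = []
    for field, expected in contract.items():
        if field not in row:
            errors.append(f"missing field: {field}")
        elif not isinstance(row[field], expected):
            actual = type(row[field]).__name__
            errors.append(f"{field}: expected {expected.__name__}, got {actual}")
    return errors

def quality_report(rows: list, contract: dict) -> dict:
    """Summarize contract violations for a batch, keyed by row index."""
    failures = {}
    for index, row in enumerate(rows):
        errors = validate_row(row, contract)
        if errors:
            failures[index] = errors
    return {"rows": len(rows), "failed": len(failures), "failures": failures}

batch = [
    {"order_id": "o1", "amount": 9.5, "currency": "USD"},
    {"order_id": "o2", "amount": "9.5"},  # wrong type and missing currency
]
report = quality_report(batch, CONTRACT)
print(report)
```

Publishing the `failed` count as a metric and alerting on it is one simple way to connect quality validation to an SLA.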

The Engineering Mindset Shift

Senior data engineers think in:

- Failure modes

- Consistency trade-offs

- Throughput ceilings

- Data lifecycle governance

Tools change. Fundamentals compound.

Master the abstraction layers beneath the toolchain — and you’ll design systems that survive technology churn.

2️⃣ Executive Version

Why Tool-Focused Data Teams Underperform — And How to Fix It

Data is now a core strategic asset. Yet many organizations invest heavily in tools while underinvesting in engineering fundamentals.

As observed by Riya Khandelwal, many data engineers are tool-focused rather than fundamentals-focused. This distinction has business consequences.

The Hidden Cost of Tool-Centric Thinking

When teams prioritize tools over architecture:

- Pipelines break during scale

- Cloud costs spiral unexpectedly

- Data quality erodes stakeholder trust

- New integrations require rework

The issue isn’t technology — it’s system design maturity.

What Executive Leaders Should Prioritize

1. Architectural Literacy

Teams should understand distributed systems, data modeling, and performance engineering — not just dashboards and connectors.

2. Reliability Engineering

Data SLAs, observability frameworks, incident response playbooks, and lineage tracking protect decision integrity.

3. Cost-Aware Design

Efficient partitioning, workload isolation, and compute optimization reduce unnecessary cloud burn.

4. Governance by Design

Data contracts, schema versioning, and quality automation must be built into pipelines — not retrofitted.

Strategic Insight

Tools are replaceable. Architecture is durable.

Organizations that cultivate engineering fundamentals:

- Scale more predictably

- Maintain stakeholder trust

- Reduce technical debt

- Improve cross-functional agility

The competitive advantage lies not in adopting the newest platform — but in building teams that understand the principles behind them.

3️⃣ Career Development Version

If You’re a Data Engineer, Read This Before Learning Another Tool

It’s tempting to chase the newest certification, framework, or cloud badge. But as Riya Khandelwal points out, mastering tools alone won’t make you exceptional.

The real career accelerator? Fundamentals.

Why Tools Won’t Make You Senior

Anyone can learn syntax.

Few understand system behavior.

If you don’t know:

- Why partitioning affects performance

- Why distributed systems must trade consistency against availability when the network partitions

- Why schema design impacts analytics speed

—you’re limiting your ceiling.

The Skills That Create Long-Term Growth

🔹 Deep SQL and Query Optimization

Understand execution plans, indexing, clustering, and cost models.
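A low-cost way to practice reading plans is SQLite's `EXPLAIN QUERY PLAN`, available through Python's standard `sqlite3` module. The sketch below uses a hypothetical `events` table and compares the plan for the same query before and after adding an index. The exact wording of the plan text differs between SQLite versions, so treat the output as indicative.

```python
import sqlite3

def query_plan(connection, sql: str, params=()) -> list:
    """Return the human-readable 'detail' column of EXPLAIN QUERY PLAN."""
    rows = connection.execute("EXPLAIN QUERY PLAN " + sql, params).fetchall()
    return [row[3] for row in rows]

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE events (event_id INTEGER PRIMARY KEY, user_id INTEGER, kind TEXT)"
)
conn.executemany(
    "INSERT INTO events (user_id, kind) VALUES (?, ?)",
    [(i % 100, "click") for i in range(1000)],
)

sql = "SELECT kind FROM events WHERE user_id = ?"

plan_before = query_plan(conn, sql, (42,))  # no index yet: a full table scan
conn.execute("CREATE INDEX idx_events_user ON events (user_id)")
plan_after = query_plan(conn, sql, (42,))   # the planner can now use the index

print("before:", plan_before)
print("after: ", plan_after)
```

Warehouse engines use different plan formats, but the habit transfers: check whether the engine scans everything or narrows the search, then change the schema or the query accordingly.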

🔹 Data Modeling Expertise

Learn dimensional modeling and business logic translation.

🔹 Distributed Systems Basics

Study fault tolerance, CAP theorem, and scaling strategies.

🔹 Pipeline Resilience

Design for retries, idempotency, and late data.

🔹 Business Context Awareness

Engineering impact grows when you align with revenue, operations, or product outcomes.

The Career Truth

Tools get you hired.

Fundamentals get you promoted.

When you think in systems rather than scripts, you transition from “data engineer” to “data architect” or “technical leader.”