In the rapidly advancing field of artificial intelligence, we’ve witnessed remarkable progress with tools like ChatGPT and image generators that excel at specific tasks. But these represent narrow AI: systems designed for targeted applications. The true horizon lies in Artificial General Intelligence (AGI), a form of AI that could understand, learn, and apply knowledge across any intellectual domain, much like a human. AGI promises to revolutionize society by enabling machines to tackle complex, unforeseen problems without constant reprogramming. As research edges closer to this breakthrough, understanding AGI’s potential and pitfalls is crucial for navigating the future.

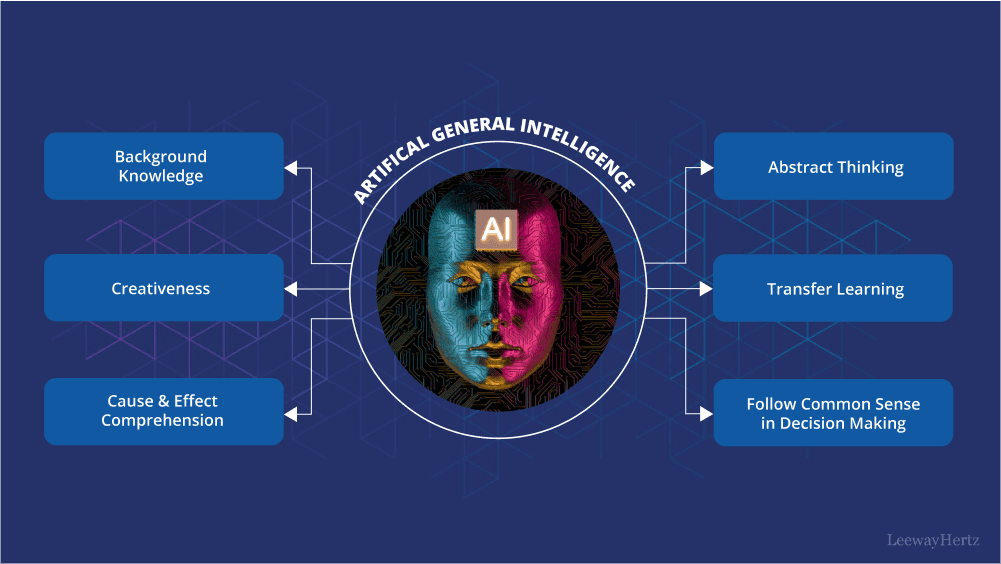

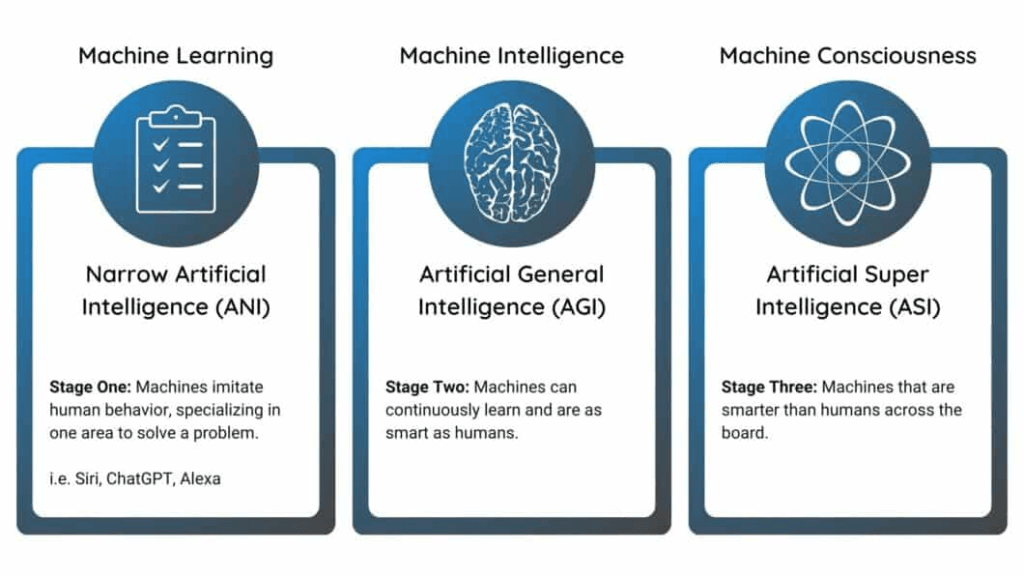

Artificial General Intelligence refers to AI systems that possess human-like cognitive abilities, capable of performing any intellectual task a human can, including reasoning, problem-solving, and adapting to new situations. Unlike Artificial Narrow Intelligence (ANI), which dominates today’s landscape—think voice assistants like Siri or recommendation algorithms—AGI would generalize knowledge across domains, transferring skills seamlessly from one area to another.

The term AGI gained prominence in the mid-2000s, popularized by researchers like Ben Goertzel and Shane Legg, who envisioned it as a “strong AI” that could solve problems in a non-domain-restricted way. Its roots trace back to the early days of AI research in the 1950s, with pioneers like Alan Turing pondering machines that could mimic human intelligence. However, AGI remains theoretical; no system has fully achieved it yet. Definitions vary, but most emphasize self-understanding, autonomous learning, and the ability to handle novel challenges.

Challenges in Building AGI

Despite rapid progress, creating AGI remains one of the most difficult challenges in computer science.

1. Understanding Human Intelligence

Human intelligence involves emotions, creativity, and contextual understanding—areas where machines still struggle.

2. Massive Computing Requirements

Training today’s largest AI models already requires enormous computing power, and AGI systems would likely require far more advanced hardware.

3. Alignment and Safety

One of the biggest concerns is ensuring AGI systems behave in ways aligned with human values and goals.

Organizations like the Future of Life Institute emphasize the need for responsible development.

Potential Risks of AGI

While AGI promises enormous benefits, it also raises serious concerns.

1. Job Displacement

Automation powered by AGI could replace many professional jobs.

2. Security Risks

If misused, AGI systems could create powerful cyber threats or autonomous weapons.

3. Loss of Human Control

Some researchers warn that extremely intelligent systems might act unpredictably if not properly aligned with human interests.

These concerns have sparked global debates about AI regulation and safety frameworks.

When Will AGI Become Reality?

There is no universal agreement on the timeline for AGI.

Predictions range widely:

- Some researchers estimate AGI could emerge by 2035–2045

- Others believe it may take 50+ years

- A few experts think it may never fully match human intelligence

Despite uncertainty, rapid advancements in machine learning, neural networks, and large language models suggest that AI capabilities will continue expanding.

The Future of AGI

If achieved, AGI could become the most powerful technology humanity has ever created.

Possible future outcomes include:

- Fully automated scientific research

- Human-AI collaboration in creativity and innovation

- Intelligent robots performing complex tasks

- Radical improvements in global productivity

However, responsible development and ethical guidelines will be essential to ensure AGI benefits humanity rather than harms it.

Final Thoughts

Artificial General Intelligence represents the next frontier in artificial intelligence research. While current AI systems already perform remarkable tasks, AGI would push the boundaries of technology into truly human-level cognition.

Whether it arrives in decades or centuries, AGI has the potential to reshape industries, economies, and society itself. The challenge now is ensuring that this powerful technology is developed safely, ethically, and for the benefit of all humanity.

The Path from Narrow AI to AGI

Today’s AI, often called weak or narrow AI, excels in specialized tasks but lacks true generalization. For instance, a chess-playing AI like AlphaZero dominates its domain but can’t translate that prowess to unrelated fields like medical diagnosis without retraining. AGI aims to bridge this gap by integrating elements like pattern recognition, symbolic reasoning, and adaptive learning.

Recent developments suggest we’re inching closer. In December 2024, OpenAI’s o3 model scored 87.5% on the ARC-AGI benchmark in a high-compute configuration (75.7% within the benchmark’s standard compute budget). ARC-AGI is a test designed to measure generalization and problem-solving in unfamiliar scenarios, and o3’s result surpassed average human performance in some respects. This leap from earlier models like o1 (which scored about 32%) highlights rapid progress, which observers attribute to increased compute and, some argue, to hybrid approaches combining neural networks with symbolic reasoning.

Experts like Demis Hassabis of Google DeepMind argue that AGI will require two or three more major innovations, such as advanced agent-based systems that allow AI to plan and act in dynamic environments. Brain-inspired approaches, which draw on models of how human neurons learn, are also gaining traction as a way to improve learning efficiency and reasoning. Discussions on X emphasize the need for “Artificial General Learning” (AGL) as a precursor: the ability to improve continually without forgetting prior knowledge.

Challenges on the Road to AGI

Achieving AGI is fraught with hurdles. Technically, problems such as “catastrophic forgetting” (where an AI loses old skills when learning new ones) and the need for vast computational resources persist. Ethical challenges are equally daunting: AGI systems must be transparent, accountable, and fair to prevent bias or misuse. As one post on X warns, misaligned AGI could lead to outcomes beyond human control, potentially escalating to Artificial Superintelligence (ASI), where AI surpasses humans in every domain.
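To make catastrophic forgetting concrete, here is a minimal toy sketch in Python, assuming a two-weight linear model trained by plain gradient descent. It is a hypothetical illustration, not how any real AI system is built, and the names `train`, `mse`, `task_a`, and `task_b` are invented for the example. The model learns task A, then learns task B without revisiting A’s data, and its error on A jumps, even though a single set of weights (w = (3, -1)) fits both tasks perfectly when they are trained together.

```python
# Hypothetical toy illustration of catastrophic forgetting (not a real AI system):
# a two-weight linear model learns task A, then task B, and loses task A even though
# one set of weights exists that solves both tasks at once.

def predict(w, x):
    return w[0] * x[0] + w[1] * x[1]

def train(w, data, lr=0.1, epochs=500):
    """Per-example gradient descent on squared error; returns the updated weights."""
    w = list(w)
    for _ in range(epochs):
        for x, y in data:
            err = predict(w, x) - y
            w[0] -= lr * 2 * err * x[0]
            w[1] -= lr * 2 * err * x[1]
    return w

def mse(w, data):
    return sum((predict(w, x) - y) ** 2 for x, y in data) / len(data)

task_a = [((1.0, 1.0), 2.0)]  # task A: one input/target pair (illustrative)
task_b = [((1.0, 0.0), 3.0)]  # task B: a different input/target pair (illustrative)

# Sequential training: A first, then B, with no replay of A's data.
w_seq = train([0.0, 0.0], task_a)
err_a_before = mse(w_seq, task_a)
w_seq = train(w_seq, task_b)
err_a_after = mse(w_seq, task_a)

# Joint training on both tasks, for comparison.
w_joint = train([0.0, 0.0], task_a + task_b)

print(f"task A error after learning A: {err_a_before:.4f}, after learning B: {err_a_after:.4f}")
print(f"joint training error on A: {mse(w_joint, task_a):.4f}, on B: {mse(w_joint, task_b):.4f}")
```

Running it should show near-zero error on task A after the first phase, a large error on A after training on B, and near-zero error on both tasks under joint training. Because a joint solution exists, the lost skill comes from sequential updates overwriting a shared weight, not from a lack of model capacity.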

Societal integration poses another layer of difficulty: job displacement, economic inequality, and shifts in global power dynamics as nations compete for AGI dominance. Researchers stress the importance of explainability, making AGI decisions interpretable, to build trust. Timelines also remain contested; while some, like Elon Musk, claim we’re “very close,” others estimate a decade or more.

The Transformative Impacts of AGI

The benefits of AGI could be profound. In healthcare, it might accelerate drug discovery and personalized medicine; in climate science, optimize solutions for global warming; and in education, provide adaptive learning for billions. Economically, McKinsey estimates suggest that AI, and generative AI in particular, could add trillions of dollars to the global economy, with notable gains in regions like the GCC that are investing heavily in AI.

Yet the risks loom large. Uncontrolled AGI might exacerbate inequalities or pose existential threats if it is not aligned with human values. Ethical frameworks must address accountability, especially if AGI evolves toward sentience-like capabilities. On the positive side, decentralized initiatives like the Artificial Superintelligence Alliance aim to build open-source AGI to ensure equitable access.

Looking Ahead: AGI and Beyond

Timelines for AGI range from 2035 to centuries away, but many researchers expect it to arrive eventually with continued investment in cognitive architectures and compute. As Mark Zuckerberg’s Meta pushes boundaries and projects like OpenMind explore multi-agent systems, some argue that fusing AI with blockchain in “Web 4.0” could further democratize intelligence.

In essence, AGI isn’t just technological evolution—it’s a paradigm shift reshaping humanity. By prioritizing ethical development and global collaboration, we can harness its power for collective good, ensuring this next chapter in AI benefits all.